- [ ] Stanford CS231n link

- [ ] Blogs:

- [ ] Zeiler, Matthew D. and Fergus, Rob. Visualizing and Understanding Convolutional Networks. In ECCV, 2014. Paper Link

- Pick a single intermediate neuron

- Compute gradient of neuron value with respect to image pixels

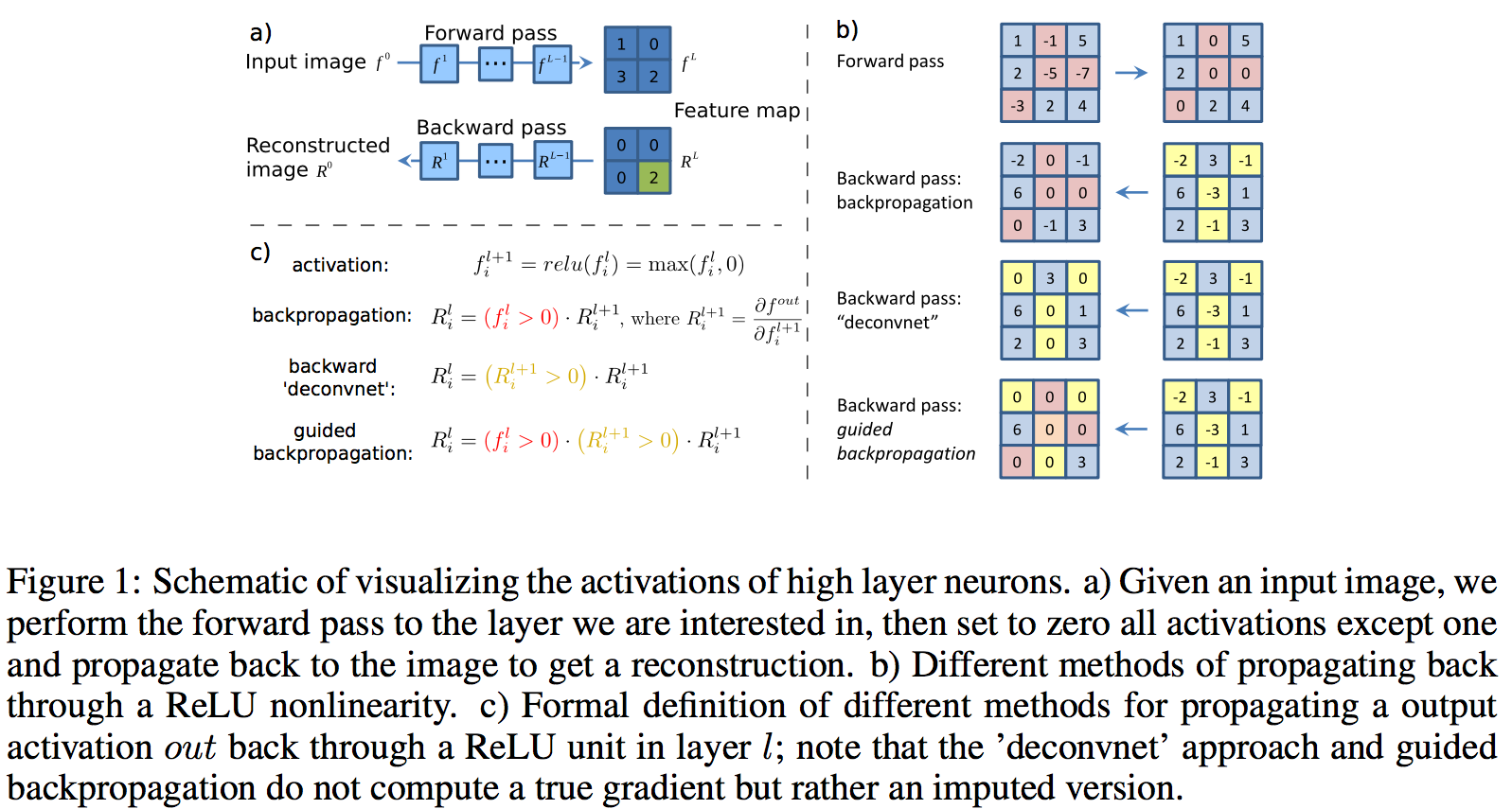
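
The two steps above can be sketched with a tiny hand-written network. Everything here is an assumption made for illustration: the two-layer ReLU network, its random weights `W1` and `W2`, and the helper names are hypothetical, and the chosen neuron is an output unit for brevity. The same chain rule applies to any intermediate neuron.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy network: 8x8 image -> 16 hidden ReLU units -> 4 neurons.
W1 = rng.normal(size=(16, 64))
W2 = rng.normal(size=(4, 16))

def forward(image):
    """Return hidden pre-activations and the neuron values."""
    z = W1 @ image.ravel()
    h = np.maximum(z, 0.0)
    return z, W2 @ h

def neuron_gradient(image, neuron):
    """Gradient of one neuron value with respect to every input pixel."""
    z, _ = forward(image)
    grad_h = W2[neuron]            # d neuron / d hidden activations
    grad_z = grad_h * (z > 0)      # ReLU passes gradient only where its input was positive
    return (W1.T @ grad_z).reshape(image.shape)

image = rng.normal(size=(8, 8))
grad = neuron_gradient(image, neuron=2)   # same shape as the image
```

Pixels with a large absolute gradient are the ones whose change would move the neuron value the most.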

- [x] Gradient visualization with guided backpropagation | Deconvolution: J. T. Springenberg, A. Dosovitskiy, T. Brox, and M. Riedmiller. Striving for Simplicity: The All Convolutional Net. Paper link

- Only backprop positive gradients through each ReLU
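
A minimal sketch of how this rule changes the backward pass of a single ReLU, compared with standard backprop. The function names and the example arrays are hypothetical.

```python
import numpy as np

def relu_backward(grad_out, pre_activation):
    """Standard backprop: pass the gradient where the forward input was positive."""
    return np.where(pre_activation > 0, grad_out, 0.0)

def guided_relu_backward(grad_out, pre_activation):
    """Guided backprop: additionally drop negative incoming gradients."""
    keep = (pre_activation > 0) & (grad_out > 0)
    return np.where(keep, grad_out, 0.0)

pre_activation = np.array([1.5, -0.3, 2.0, 0.7])   # inputs seen by the ReLU in the forward pass
grad_out = np.array([0.4, 0.9, -1.2, 0.1])         # gradient arriving from the layer above

standard = relu_backward(grad_out, pre_activation)
guided = guided_relu_backward(grad_out, pre_activation)
```

In this example the third entry survives standard backprop (its forward input was positive) but is zeroed by the guided rule because the incoming gradient is negative.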

- [x] Gradient Ascent | Gradient visualization with saliency maps

K. Simonyan, A. Vedaldi, A. Zisserman. Deep Inside Convolutional Networks: Visualising Image Classification Models and Saliency Maps,

Paper Link

- (Guided) backprop VS Gradient Ascent:

- (Guided) backprop: Find the part of an image that a neuron responds to

- Gradient Ascent: Generate a synthetic image that maximally activates a neuron

- Gradient Ascent:

- Initialize image to zeros

- Repeat part: Forward image to compute current scores

- Repeat part: Backprop to get gradient of neuron value with respect to image pixels

- Repeat part: Make a small update to the image
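
The loop above can be sketched as follows. The toy network, its random weights, the step size, and the number of steps are all assumptions for illustration. Biases are added because a bias-free ReLU network has zero gradient at an all-zero image, and no regularizer is used.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy network: 8x8 image -> 16 ReLU units -> 4 output neurons.
W1 = rng.normal(size=(16, 64))
b1 = rng.normal(size=16)          # biases keep some units active for an all-zero image
W2 = rng.normal(size=(4, 16))

def score_and_gradient(image, neuron):
    """Forward pass for the neuron value, backward pass for its pixel gradient."""
    z = W1 @ image.ravel() + b1
    score = W2[neuron] @ np.maximum(z, 0.0)
    grad = W1.T @ (W2[neuron] * (z > 0))
    return score, grad.reshape(image.shape)

def gradient_ascent(neuron, steps=50, step_size=0.01):
    image = np.zeros((8, 8))                              # initialize image to zeros
    scores = []
    for _ in range(steps):
        score, grad = score_and_gradient(image, neuron)   # forward, then backprop
        scores.append(score)
        image += step_size * grad                         # small update to the image
    scores.append(score_and_gradient(image, neuron)[0])
    return image, np.array(scores)

image, scores = gradient_ascent(neuron=0)
```

On a real network the update is usually combined with some image regularization so that the synthetic image stays natural-looking; that part is left out of this sketch.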

- (Guided) backprop VS Image-specific Saliency Map

- Saliency Map: (guided) backprop from a one-hot class vector to the image, then compute the saliency from the resulting gradient
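
A sketch of the image-specific saliency map using plain (not guided) backprop. The toy network and its weights are hypothetical, and the saliency is assumed to be the maximum absolute gradient over the color channels, which is how I read Simonyan et al.

```python
import numpy as np

rng = np.random.default_rng(0)
C, H, W = 3, 6, 6

# Hypothetical toy classifier: 3x6x6 image -> 10 ReLU units -> 4 class scores.
W1 = rng.normal(size=(10, C * H * W))
b1 = rng.normal(size=10)
W2 = rng.normal(size=(4, 10))

def saliency_map(image, target_class):
    z = W1 @ image.ravel() + b1
    one_hot = np.zeros(W2.shape[0])
    one_hot[target_class] = 1.0
    grad_h = one_hot @ W2                 # backprop the one-hot vector through the output layer
    grad_z = grad_h * (z > 0)             # backprop through the ReLU
    grad_image = (W1.T @ grad_z).reshape(image.shape)
    return np.abs(grad_image).max(axis=0) # collapse channels: max absolute gradient per pixel

image = rng.normal(size=(C, H, W))
sal = saliency_map(image, target_class=1)   # shape (H, W), non-negative
```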

- [x] Guided, gradient-weighted class activation mapping

R. R. Selvaraju, A. Das, R. Vedantam, M. Cogswell, D. Parikh, and D. Batra. Grad-CAM: Visual Explanations from Deep Networks via Gradient-based Localization,

paper Link

- CAM: Class Activation Mapping

- The weights learned in the last tensor product (fully connected) layer correspond to the neuron importance weights, i.e., the importance of each feature map for each class of interest.

- Needs a simplified architecture (global average pooling followed by a single fully connected layer), so the network has to be trained again

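- Grad-CAM: the heat map is a weighted combination of the feature maps, followed by a ReLU. The weight of each feature map is the global average pooling of the gradient of the class score with respect to that feature map, so no architectural change or retraining is needed.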
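
A minimal sketch of the Grad-CAM combination step, assuming the feature maps and the gradients of the class score with respect to them are already available as arrays (in practice they would come from a framework's autograd). The toy head below, global average pooling followed by a linear layer with hypothetical weights `fc_weights`, is chosen because its gradients are known in closed form; in that case the Grad-CAM weights reduce to the CAM weights up to a constant factor.

```python
import numpy as np

def grad_cam(feature_maps, gradients):
    """feature_maps, gradients: arrays of shape (K, H, W) for one class."""
    weights = gradients.mean(axis=(1, 2))               # global average pooling of the gradients
    cam = np.tensordot(weights, feature_maps, axes=1)   # weighted sum over the K feature maps
    return np.maximum(cam, 0.0)                         # ReLU keeps positive evidence only

rng = np.random.default_rng(0)
K, H, W = 5, 4, 4
feature_maps = rng.random((K, H, W))

# Hypothetical CAM-style head: score = sum_k fc_weights[k] * mean(feature_maps[k]),
# so d score / d feature_maps[k, i, j] = fc_weights[k] / (H * W) everywhere.
fc_weights = rng.normal(size=K)
gradients = np.broadcast_to(fc_weights[:, None, None] / (H * W), (K, H, W))

heatmap = grad_cam(feature_maps, gradients)             # shape (H, W)
```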

Evaluation Methods

- Region Perturbation / Occlusion

- Deconv: Occlusion experiments: occlude a small square patch of the input image, then apply the network to the occluded image. Sum the responses from one channel in layer 5.
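
The occlusion experiment can be sketched as a sliding patch. `score_fn` is a hypothetical stand-in for "apply the network and sum the responses of one channel"; the patch size, stride, and fill value are assumptions.

```python
import numpy as np

def occlusion_map(image, score_fn, patch=4, stride=4, fill=0.0):
    """Score of the occluded image for every patch position."""
    H, W = image.shape
    rows = range(0, H - patch + 1, stride)
    cols = range(0, W - patch + 1, stride)
    out = np.zeros((len(rows), len(cols)))
    for i, r in enumerate(rows):
        for j, c in enumerate(cols):
            occluded = image.copy()
            occluded[r:r + patch, c:c + patch] = fill   # hide one square patch
            out[i, j] = score_fn(occluded)
    return out

def toy_score(img):
    # Hypothetical "network" that responds only to the top-left 4x4 corner.
    return img[:4, :4].sum()

image = np.ones((8, 8))
scores = occlusion_map(image, toy_score)
```

Positions where the score drops the most mark the regions the response depends on; here that is the top-left patch.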

- Evaluating the Visualization of What a Deep Neural Network Has Learned:

- Region perturbation in heatmap order: perturb regions from most to least relevant and measure how much the score decreases

- Grad-CAM: Faithfulness: the ability to accurately explain the function learned by the model.

- Grad-CAM++: Defined some metrics:

- The capability of an explanation map in highlighting regions: occlude the parts in the unimportant region

- Average confidence drop percentage

- Percentage of samples whose confidence increases
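
A sketch of the two metrics, assuming they are defined as follows: for each sample, `full_conf` is the class confidence on the original image and `masked_conf` the confidence when only the region highlighted by the explanation map is shown. The function names and the example numbers are hypothetical.

```python
import numpy as np

def average_drop(full_conf, masked_conf):
    """Mean relative confidence drop in percent; increases count as zero drop."""
    full = np.asarray(full_conf, dtype=float)
    masked = np.asarray(masked_conf, dtype=float)
    return 100.0 * np.mean(np.maximum(0.0, full - masked) / full)

def increase_in_confidence(full_conf, masked_conf):
    """Percentage of samples whose confidence goes up on the masked image."""
    full = np.asarray(full_conf, dtype=float)
    masked = np.asarray(masked_conf, dtype=float)
    return 100.0 * np.mean(masked > full)

full_conf = [0.9, 0.8, 0.5, 0.6]
masked_conf = [0.45, 0.8, 0.7, 0.3]

drop = average_drop(full_conf, masked_conf)
increase = increase_in_confidence(full_conf, masked_conf)
```

A lower average drop and a higher increase both indicate that the explanation map keeps the evidence the model relies on.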

- RISE: Randomized Input Sampling for Explanation of Black-box Models: probability AUC curves for deletion and insertion
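
A sketch of deletion and insertion curves, under the assumption that deletion removes pixels in order of decreasing saliency from the original image and insertion adds them in the same order to a blank baseline. `score_fn`, the constant baseline, and the number of steps are hypothetical; the area is computed with the trapezoidal rule over the fraction of steps taken.

```python
import numpy as np

def perturbation_curve(image, saliency, score_fn, mode="deletion", steps=4, baseline=0.0):
    order = np.argsort(saliency.ravel())[::-1]          # most important pixels first
    flat_image = image.ravel().astype(float)
    blank = np.full(flat_image.shape, baseline, dtype=float)
    if mode == "deletion":
        current, source = flat_image.copy(), blank      # start from the image, erase pixels
    else:
        current, source = blank.copy(), flat_image      # start blank, reveal pixels
    scores = [score_fn(current.reshape(image.shape))]
    for chunk in np.array_split(order, steps):
        current[chunk] = source[chunk]
        scores.append(score_fn(current.reshape(image.shape)))
    scores = np.array(scores)
    auc = float(np.mean((scores[:-1] + scores[1:]) / 2.0))   # trapezoids on an axis from 0 to 1
    return scores, auc

def toy_score(img):
    # Hypothetical "model" whose output depends only on the top-left 2x2 block.
    return img[:2, :2].mean()

image = np.ones((4, 4))
saliency = np.zeros((4, 4))
saliency[:2, :2] = 1.0                                   # saliency that matches the toy model

_, deletion_auc = perturbation_curve(image, saliency, toy_score, "deletion")
_, insertion_auc = perturbation_curve(image, saliency, toy_score, "insertion")
```

A good explanation gives a low deletion AUC and a high insertion AUC, which is what this matching saliency map produces on the toy model.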

- Injecting noise

- Rotating and translating the image

- Object Localization / Segmentation

- CAM:

- Localization Evaluation: (ILSVRC2014) bbox generation: simple thresholding by 20% of the max value and take bbox of the largest connected component. Code

- Grad-CAM:

- 15% of the max value as threshold

- weakly supervised segmentation
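
The thresholding procedure described above (keep values above a fraction of the maximum, then take the bounding box of the largest connected component) can be sketched in plain NumPy. The function name is hypothetical, and 4-connectivity is an assumption; the notes do not say which connectivity is used.

```python
import numpy as np
from collections import deque

def heatmap_to_bbox(heatmap, threshold_ratio=0.2):
    """Return (row0, col0, row1, col1), inclusive, of the largest component above threshold."""
    mask = heatmap >= threshold_ratio * heatmap.max()
    visited = np.zeros(mask.shape, dtype=bool)
    H, W = mask.shape
    best = []
    for r0, c0 in zip(*np.nonzero(mask)):
        if visited[r0, c0]:
            continue
        component, queue = [], deque([(int(r0), int(c0))])
        visited[r0, c0] = True
        while queue:                                   # breadth-first flood fill
            r, c = queue.popleft()
            component.append((r, c))
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):   # assumed 4-connectivity
                rr, cc = r + dr, c + dc
                if 0 <= rr < H and 0 <= cc < W and mask[rr, cc] and not visited[rr, cc]:
                    visited[rr, cc] = True
                    queue.append((rr, cc))
        if len(component) > len(best):
            best = component
    rows, cols = zip(*best)
    return min(rows), min(cols), max(rows), max(cols)

heatmap = np.zeros((8, 8))
heatmap[2:5, 3:7] = 1.0      # main activation blob
heatmap[7, 0] = 0.5          # small separate blob that should be ignored
bbox = heatmap_to_bbox(heatmap, threshold_ratio=0.2)
```

Passing `threshold_ratio=0.15` gives the Grad-CAM variant of the same procedure.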

- Pointing Game: (Top-down Neural Attention by Excitation Backprop)
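
A simplified sketch of the pointing game, assuming a hit is counted when the maximum of the heatmap falls inside the ground-truth box and accuracy is the fraction of hits. Any tolerance radius around the maximum that a published protocol may use is omitted, and the function names are hypothetical.

```python
import numpy as np

def pointing_game_hit(heatmap, bbox):
    """True if the heatmap maximum lies inside bbox = (row0, col0, row1, col1), inclusive."""
    r, c = np.unravel_index(np.argmax(heatmap), heatmap.shape)
    r0, c0, r1, c1 = bbox
    return bool(r0 <= r <= r1 and c0 <= c <= c1)

def pointing_game_accuracy(heatmaps, bboxes):
    hits = sum(pointing_game_hit(h, b) for h, b in zip(heatmaps, bboxes))
    return hits / len(heatmaps)

heatmap_a = np.zeros((6, 6))
heatmap_a[2, 3] = 1.0        # peak inside the box
heatmap_b = np.zeros((6, 6))
heatmap_b[5, 0] = 1.0        # peak outside the box

accuracy = pointing_game_accuracy([heatmap_a, heatmap_b], [(1, 2, 4, 4), (1, 2, 4, 4)])
```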

- Grad-CAM++:

- Design an IoU between the area of the bounding box and the heatmap

- Human Trust:

- Grad-CAM: class discrimination

- Grad-CAM++

- Learning from Explanation: Knowledge Distillation:

- Grad-CAM++: Define a student-teacher network by introducing an interpretability loss, used instead of or in combination with the traditional distillation loss. The interpretability loss is the squared distance between the CAM of the teacher net and the CAM of the student net.
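
A sketch of the loss described above, assuming the distance is the L2 norm over all heatmap pixels. The combination function and its weights `alpha` and `omega` are hypothetical; setting `alpha=0` corresponds to using the interpretability loss instead of the distillation loss.

```python
import numpy as np

def interpretability_loss(student_cam, teacher_cam):
    """Squared L2 distance between the student and teacher class activation maps."""
    diff = np.asarray(student_cam, dtype=float) - np.asarray(teacher_cam, dtype=float)
    return float(np.sum(diff ** 2))

def student_loss(task_loss, distill_loss, student_cam, teacher_cam, alpha=1.0, omega=1.0):
    """Hypothetical weighted sum of task, distillation, and interpretability terms."""
    return task_loss + alpha * distill_loss + omega * interpretability_loss(student_cam, teacher_cam)

teacher_cam = np.array([[1.0, 2.0], [3.0, 4.0]])
student_cam = np.array([[1.0, 2.0], [3.0, 2.0]])

loss_cam = interpretability_loss(student_cam, teacher_cam)
total = student_loss(0.5, 0.25, student_cam, teacher_cam, alpha=1.0, omega=0.1)
```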

- Diagnosing the image classification CNNs:

- Grad-CAM:

- Analyzing failure modes for CNNs

- Effect of adversarial noise for CNNs

- Identifying bias in dataset

- Counterfactual Explanations:

- Grad-CAM

- Applications:

- CAM:

- Discovering informative objects in the scenes: SUN dataset

- Concept localization in weakly labeled images: hard-negative mining

- Weakly supervised text detector

- Interpreting visual question answering

- Grad-CAM:

- Image Captioning

- Visual Question Answering

- Grad-CAM++:

- Image Captioning

- 3D Action Recognition

- IntegratedGrad:

- Retinopathy Prediction

- Chemistry Models

- Class-specific Units: CAM, still need to read